Description

Pricing Details

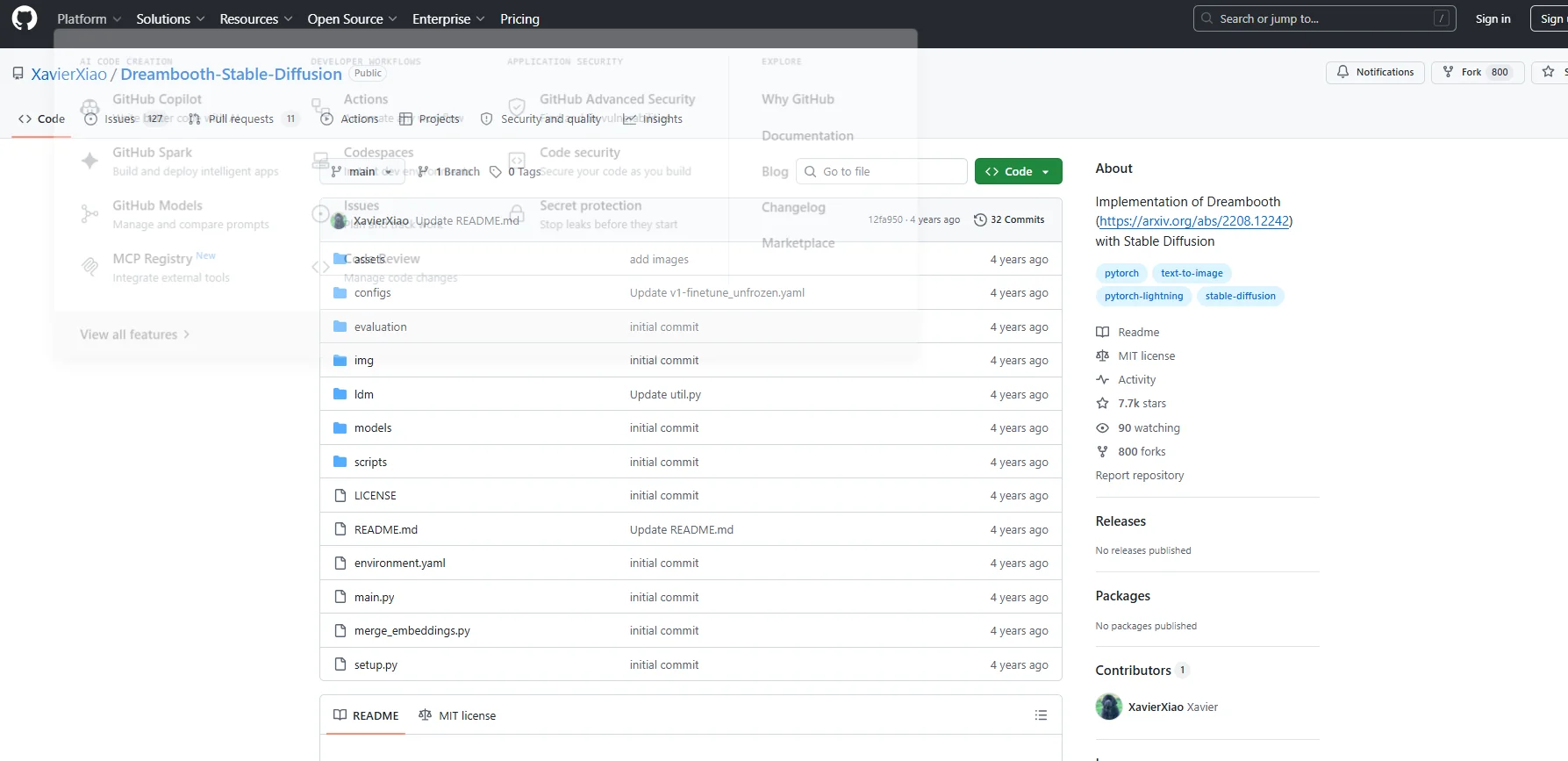

The software itself is free: the code is fully open source under the MIT license, so it can be used without fees or commercial restrictions. There are no official paid plans, because the project is an open-source codebase rather than a closed commercial product. The actual cost comes from the computing power needed to run or train it, not from a subscription. The system needs a capable graphics card, an RTX 3060 or better, with 12 to 24 GB of VRAM for smooth performance. Local hardware costs roughly $300 to over $1,500 depending on specifications, while cloud GPU services typically cost $1.50 to $3 per hour. Training a single model costs approximately $1 to $10 depending on data size and settings, and takes between 10 minutes and an hour.
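To make these figures easier to compare, the short sketch below works through the arithmetic using only the numbers quoted above. The function names `gpu_time_cost` and `break_even_hours` are hypothetical helpers introduced for illustration and are not part of the project. The sketch assumes billing is strictly proportional to GPU time.

```python
def gpu_time_cost(rate_per_hour: float, minutes: float) -> float:
    """Cost in USD of renting a cloud GPU for the given number of minutes."""
    return rate_per_hour * minutes / 60.0


def break_even_hours(hardware_cost: float, rate_per_hour: float) -> float:
    """Hours of cloud rental that cost as much as buying the hardware."""
    return hardware_cost / rate_per_hour


# Figures quoted in the pricing text (USD).
CLOUD_RATE_LOW, CLOUD_RATE_HIGH = 1.50, 3.00   # per hour
TRAIN_MIN_SHORT, TRAIN_MIN_LONG = 10, 60       # minutes per training run
HARDWARE_LOW, HARDWARE_HIGH = 300, 1500        # "over $1,500" treated as 1500

if __name__ == "__main__":
    # Raw GPU time for one training run, cheapest and most expensive case.
    low = gpu_time_cost(CLOUD_RATE_LOW, TRAIN_MIN_SHORT)
    high = gpu_time_cost(CLOUD_RATE_HIGH, TRAIN_MIN_LONG)
    print(f"GPU time per training run: ${low:.2f} to ${high:.2f}")

    # Cloud hours after which buying local hardware would have been cheaper.
    fewest = break_even_hours(HARDWARE_LOW, CLOUD_RATE_HIGH)
    most = break_even_hours(HARDWARE_HIGH, CLOUD_RATE_LOW)
    print(f"Break-even vs. local hardware: {fewest:.0f} to {most:.0f} cloud hours")
```

Under these assumptions, raw GPU time for a single run comes to $0.25 to $3.00, which is lower than the quoted $1 to $10 per model. The difference may reflect overhead this sketch does not model, such as setup time, data upload, or repeated runs. Buying local hardware breaks even after roughly 100 to 1,000 hours of cloud use.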